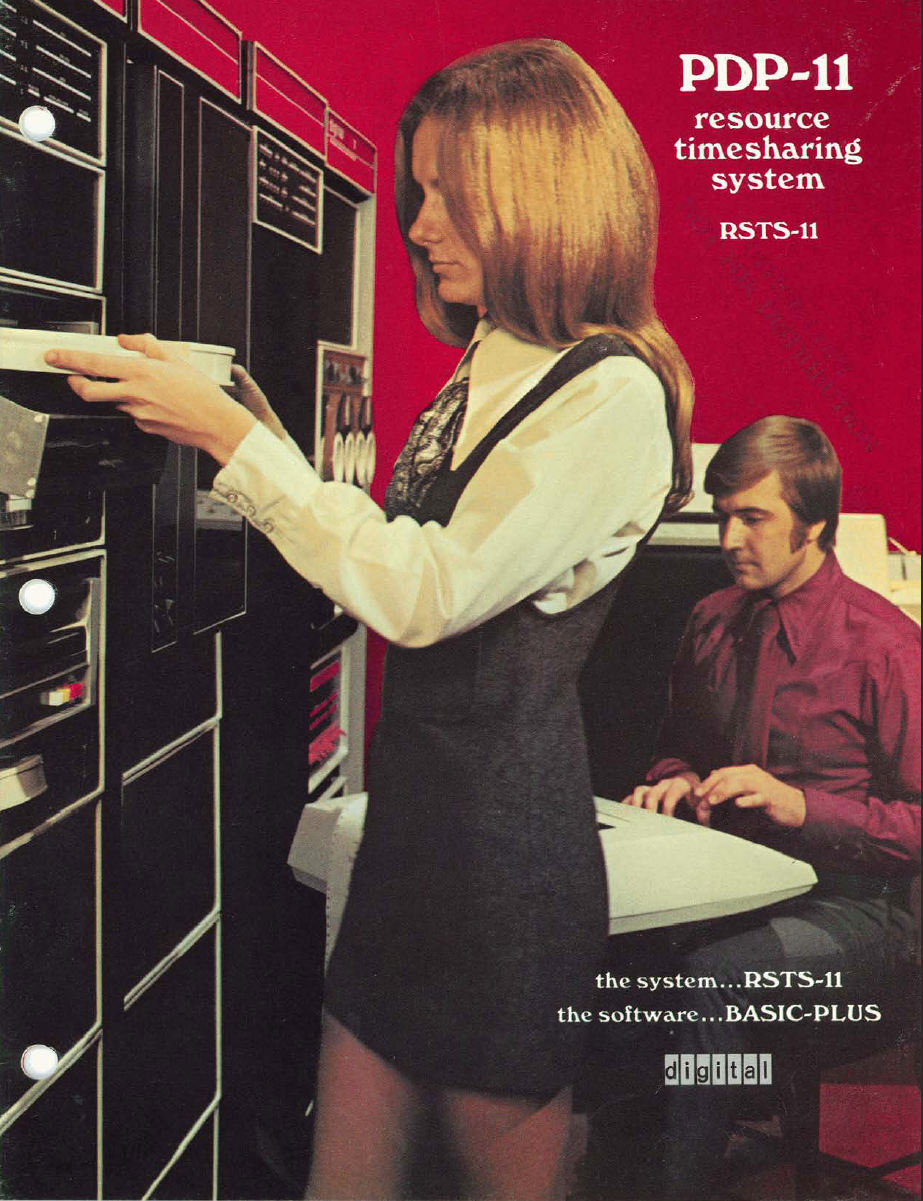

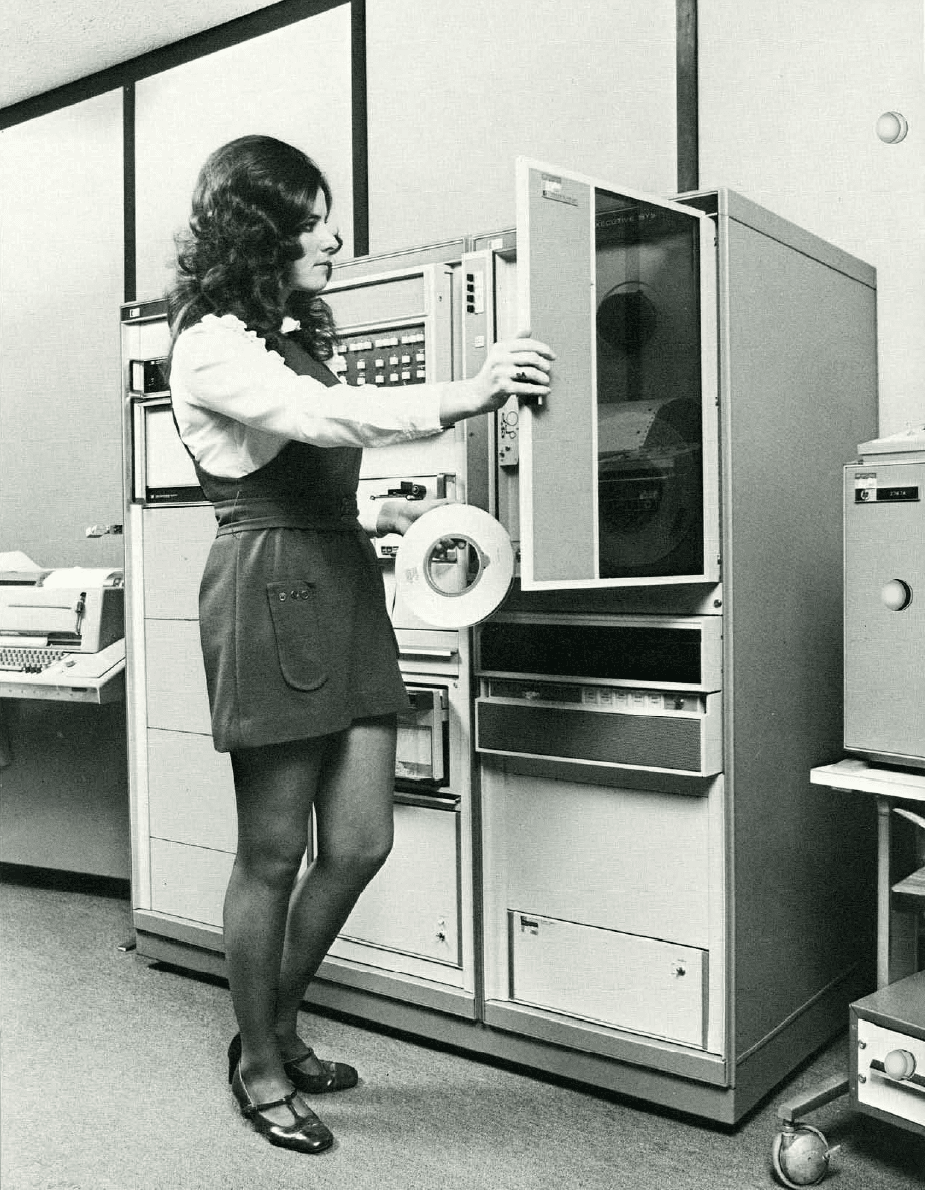

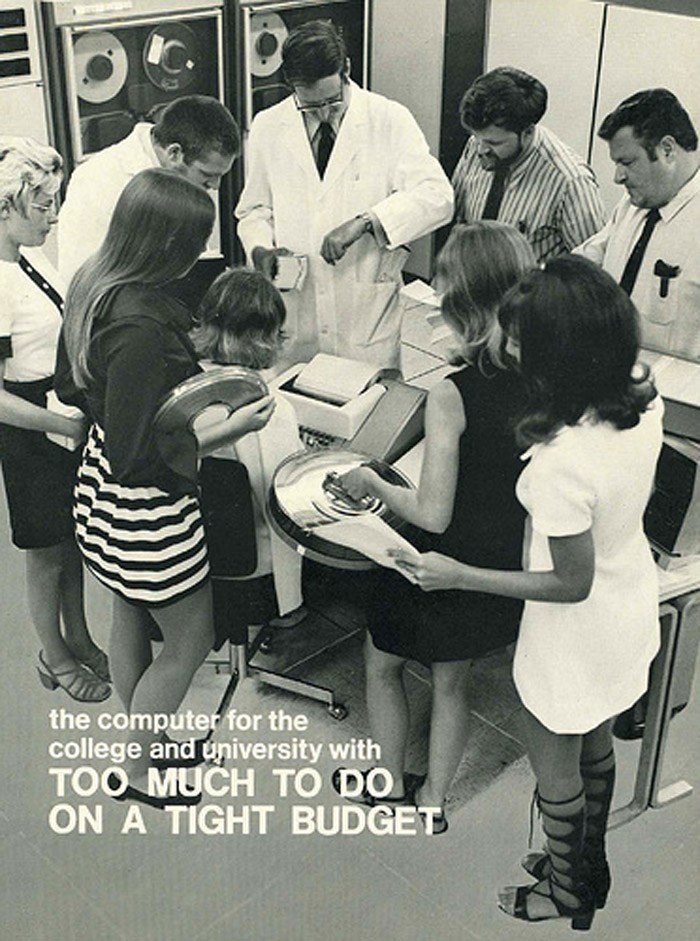

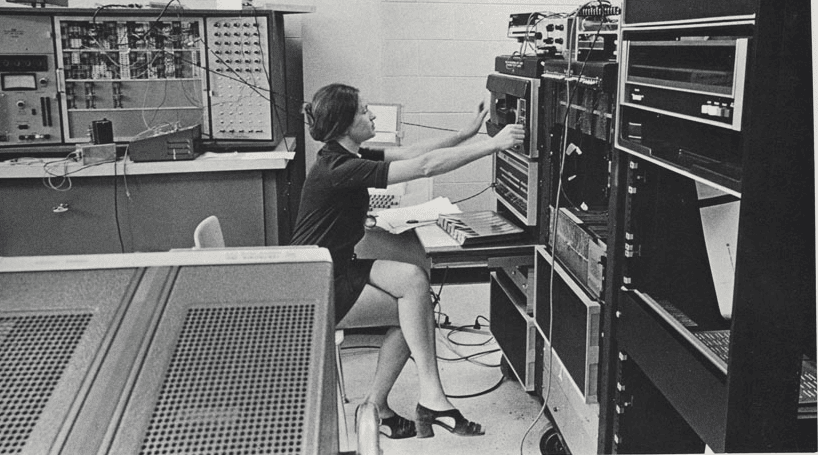

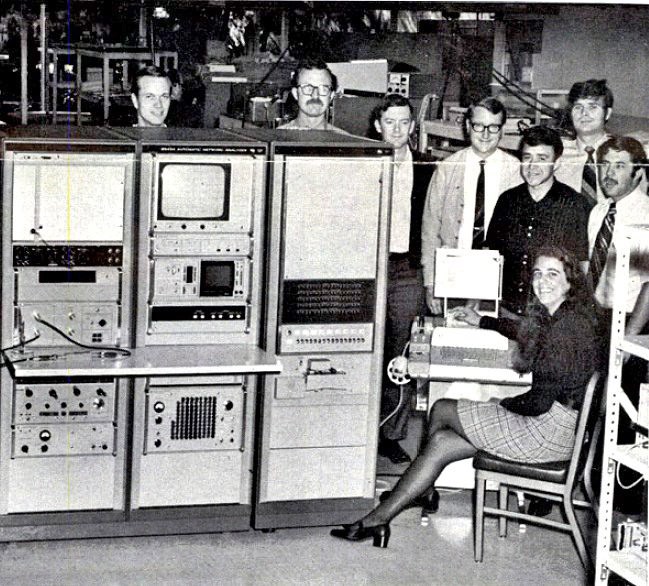

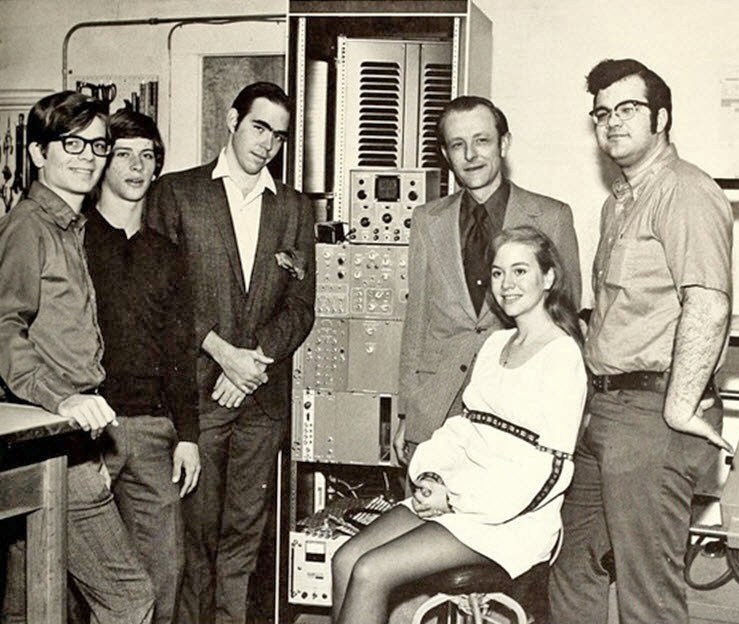

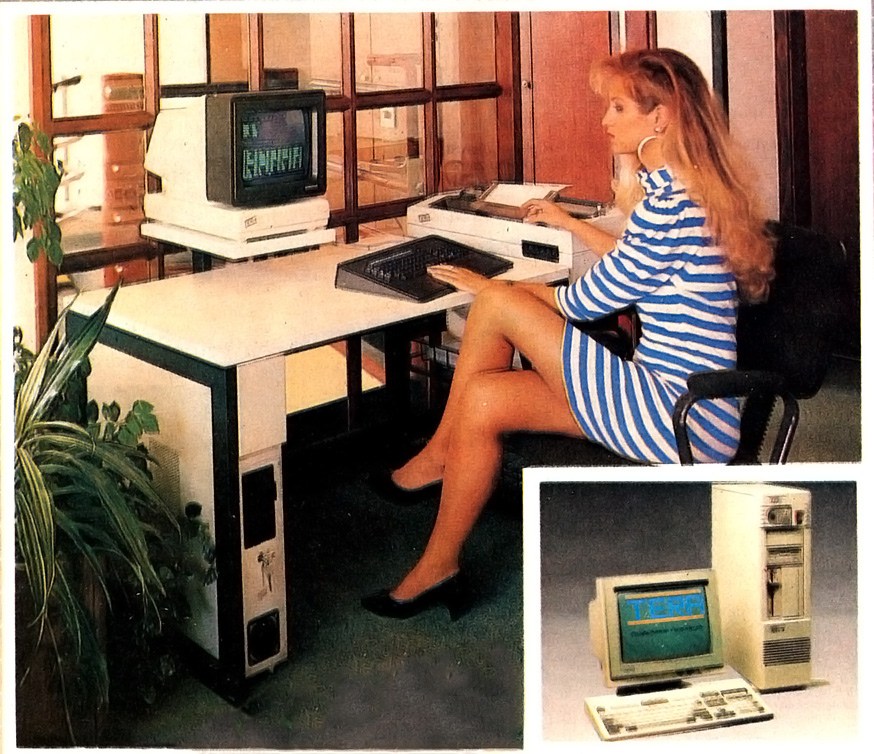

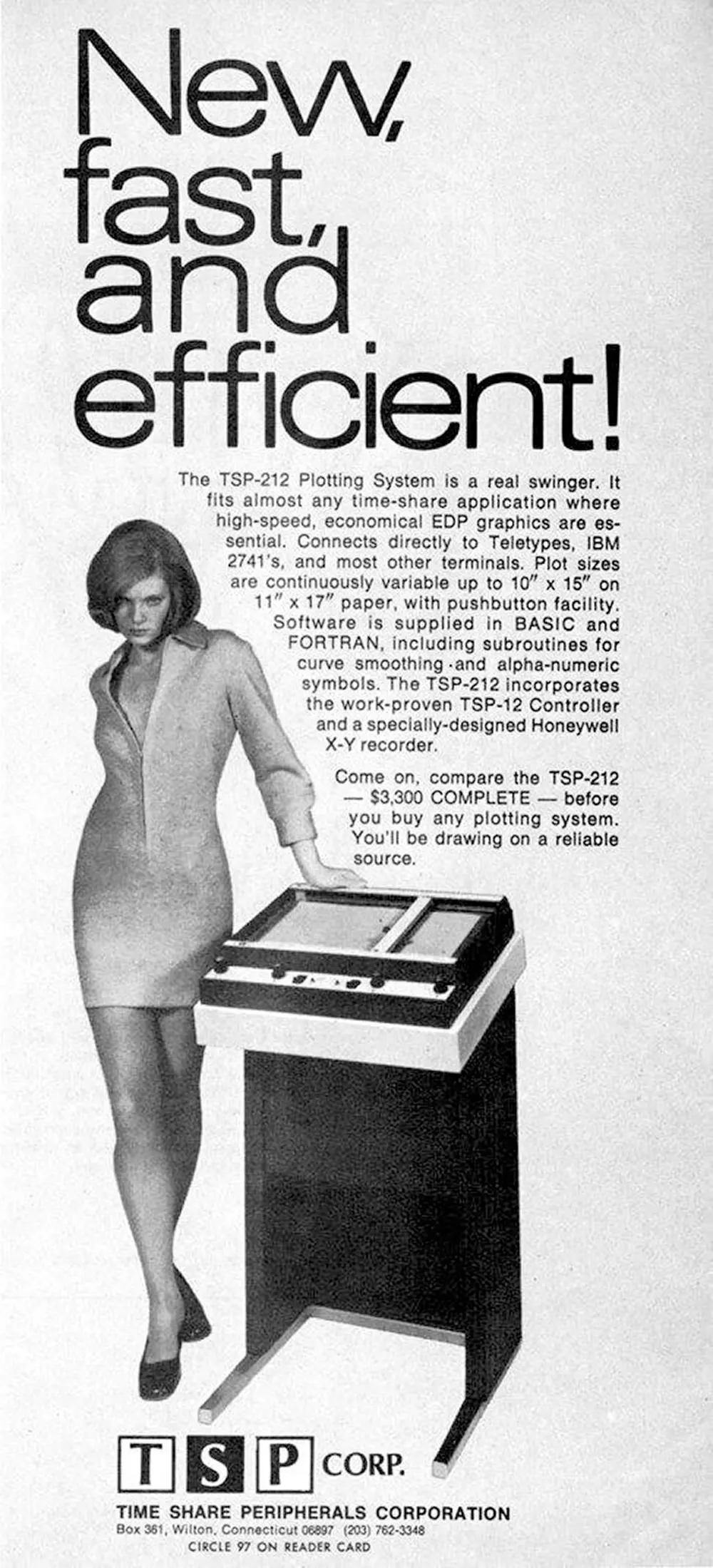

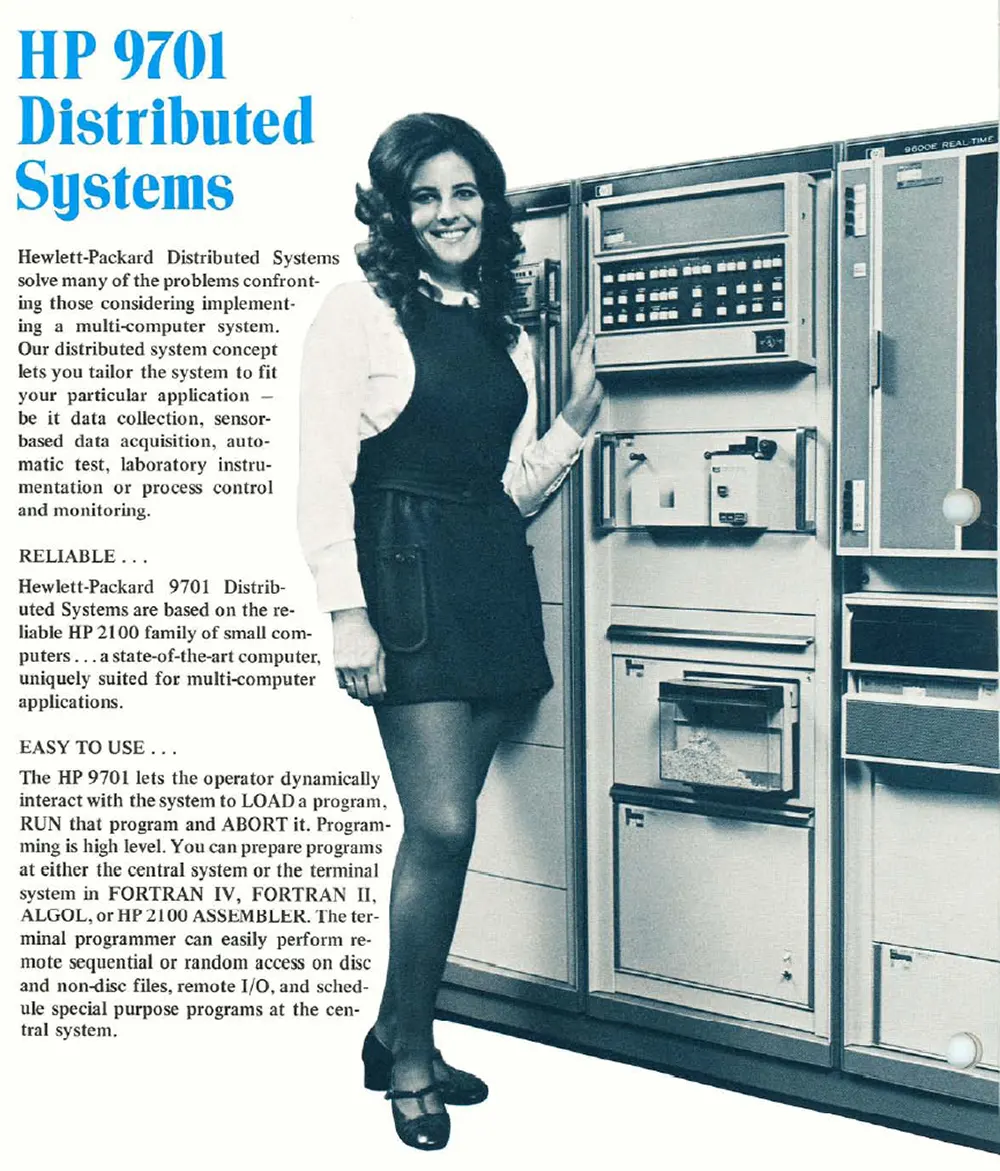

The notion that sex sells tech was exploited to grotesque ends during the era of the early gigantic computer systems.

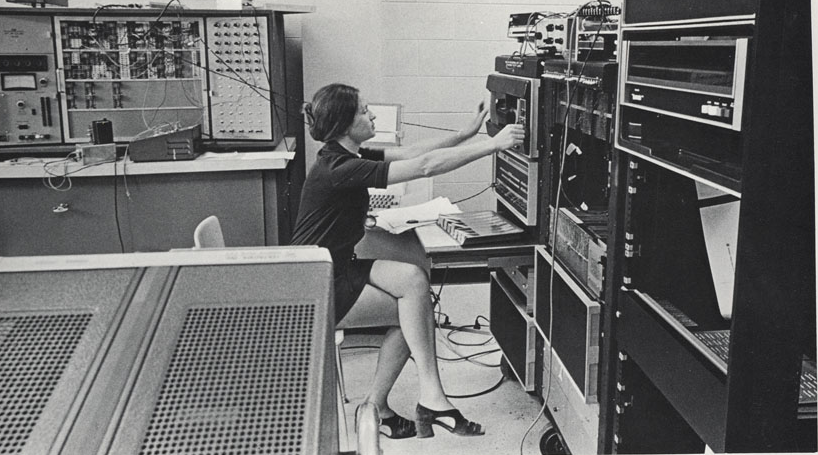

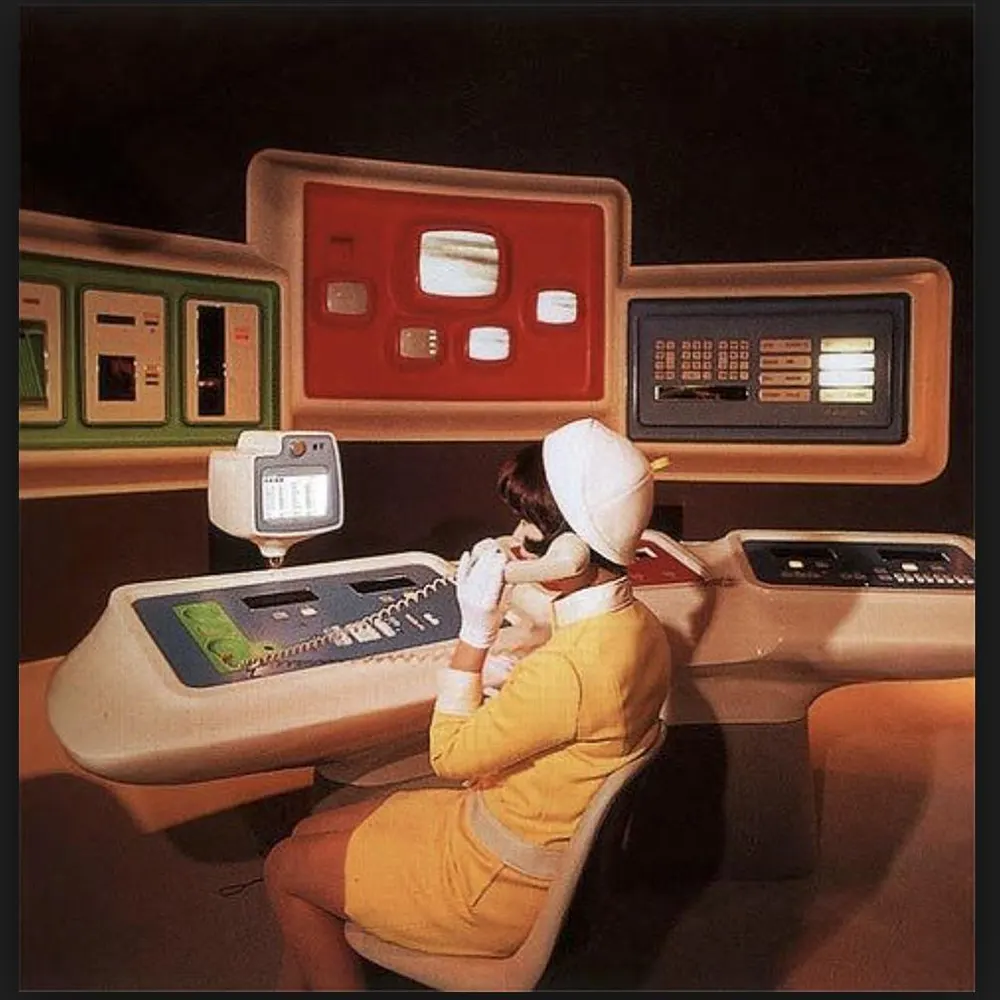

These vintage pictures, scanned from old magazines and newspapers of those bygone decades, depict attractive women, wearing as little clothing as the decency of the day allowed, promoting and advertising computers.

Falling hardware costs and increasing computer miniaturization created new markets for computers during the 1970s.

Small offices could take advantage of low-cost computers or gain access to mainframes through time-sharing services.

The growing use of computers brought major technical, organizational, and social change to the workplace. Existing business processes were largely adapted to electronic data processing, and demand for new work qualifications grew rapidly in offices and in manufacturing.

Companies first began to work with computer systems at the beginning of the 1960s. These systems were installed at computer centers.

The operating costs of such a computer center were so high that the systems had to run day and night to be profitable.

It was only large companies that generated such large volumes of data. Accordingly, it was large banks and insurance companies that first set up computer centers.

A small number of highly respected computer specialists worked there – programmers who wrote the software and operators who ran the systems.

In 1975, the microcomputer was introduced into the small business sector. Microcomputer technology gave small businesses the ability to analyze their own business data, which let them compete with large corporations.

The earliest application of computers relied on their advantages over humans in repeatedly following simple instructions – for example, tabulating census results.

Over time, computer hardware became dramatically more capable, and advances in software, such as word processors, spreadsheets, and email, made it easier for workers to access these capabilities.

The 1980s, however, saw the victory of an idea that only a few visionaries had dared to entertain: a computer on every desk – the personal computer, or PC.

When IBM finally brought out its first PC in 1981, people complained about its MS-DOS operating system, which was considered second best.

However, customers held Big Blue’s reputation to be more important than the state of technology in the product, and made the IBM PC the standard for office applications.

The operating system of the Apple Macintosh, however, was revolutionary in 1984. It brought icons, windows and mice to desktops, but the Mac did not sell well until an application appeared to which it was better suited than any other computer: desktop publishing.

This boom was, of course, fostered by advances in semiconductor technology. Moore’s Law, named after Intel co-founder Gordon Moore, illustrates the dynamism of this industry. In its popular form, it holds that the power and complexity of computer chips double, and their price halves, roughly every 18 months.
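As a rough illustration of what an 18-month doubling implies, the short Python sketch below compounds it over a few time spans. The function name `moore_factor` and the chosen time spans are made up for this example, and the 18-month period is simply the figure quoted above, not a precise law.

```python
def moore_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Growth factor after `years`, assuming one doubling per period.

    The 1.5-year default is the popular 18-month figure; it is an
    assumption of this sketch, not a measured constant.
    """
    return 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Illustrative time spans only; any values can be substituted.
    for years in (1.5, 3, 6, 15):
        factor = moore_factor(years)
        # Under the same assumption, price falls by the same factor.
        print(f"{years:>4} years: capability x{factor:,.0f}, "
              f"relative price 1/{factor:,.0f}")
```

Fifteen years contain ten 18-month periods, so under this assumption capability grows by a factor of 2^10 = 1024 while the price of a given amount of computing power falls to roughly a thousandth.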

This made it possible to run applications that required ever-increasing computing power. Soon, PCs were processing still and moving pictures as well as sound.

They became of interest to artists but, above all, gave rise to a huge market for entertainment products.

In the computer industry, which originally developed for military customers, it is the producers of popular computer games that now set the tempo.

PCs were cheap, but there was still a market for more powerful desktop computers. These were built not for office or home users but to meet the demands that engineers and architects placed on computer-aided design.

As in the case of PCs, the first of these workstations, so called to distinguish them from PCs, came from a newcomer to the scene – Sun Microsystems, based in Silicon Valley.

(Photo credit: Boing Boing / HNF.de / Wikimedia Commons).